Case Study: Building a Scalable Real-Time Data Platform with Azure & Databricks

Overview

We've built a robust, real-time data platform leveraging Azure and Databricks that seamlessly ingests streaming events, handles database changes, and delivers AI-powered sentiment analysis. This architecture not only processes data efficiently but also transforms it into actionable business insights, helping organisations make faster, smarter decisions.

For non-technical leaders, think of it as a "data lakehouse" — a unified system that combines the vast storage of a data lake (for handling massive, diverse data) with the structured reliability of a data warehouse (for querying and analysing data at scale). The result? Lower cost, real-time visibility, and AI-driven advantages without the headaches of managing separate tools.

This platform demonstrates how Databricks' lakehouse approach eliminates data silos, reduces operational complexity, and scales effortlessly as your business grows. Businesses using similar setups report:

- Up to 12x faster data processing

- 97% lower total cost of ownership (TCO) by replacing outdated tools

- The ability to handle terabytes of real-time data daily — turning raw information into a competitive edge

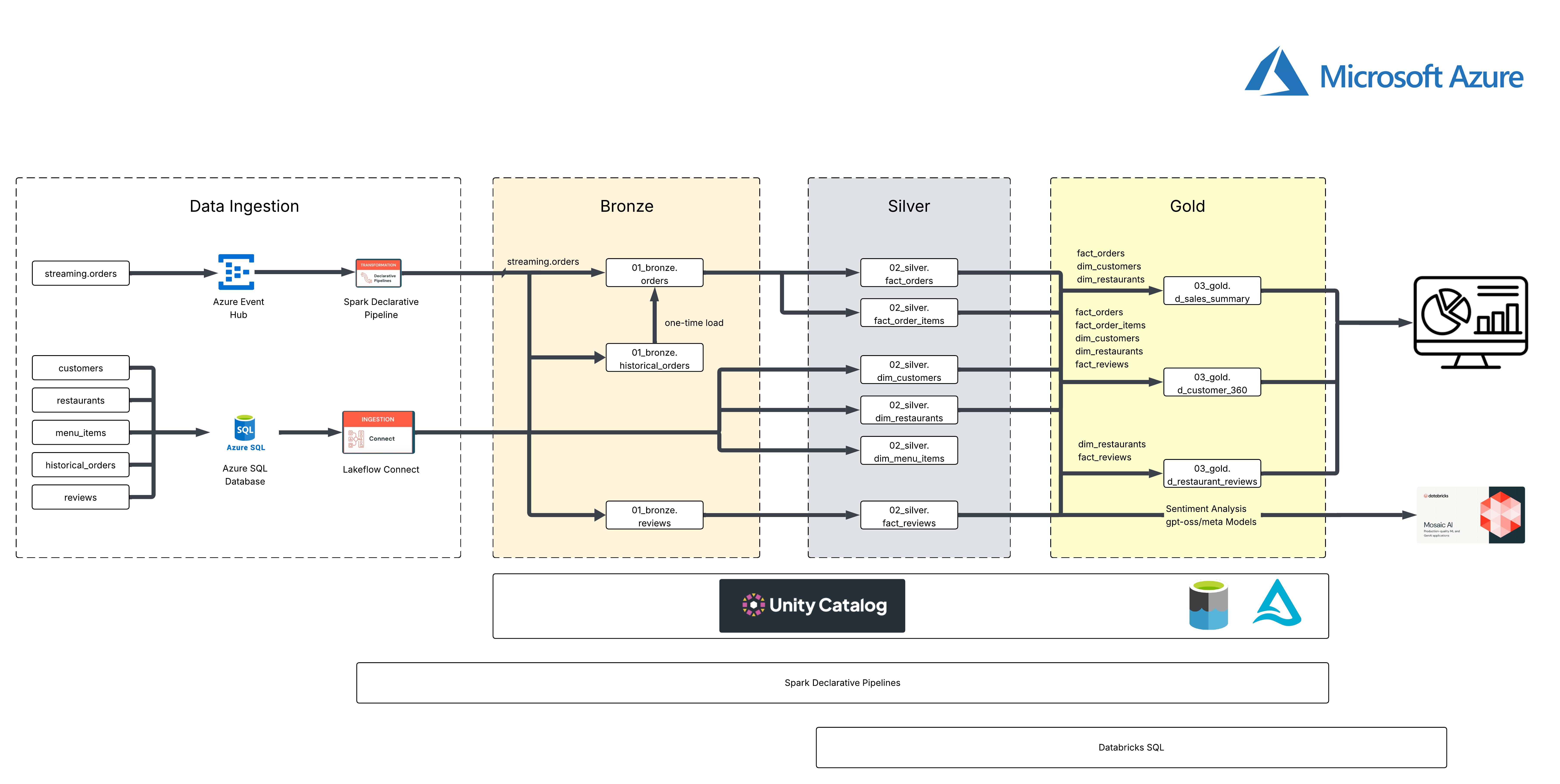

High-level Architecture: From Raw Data to Business Value

To keep things scalable and reliable, the platform follows a clear, layered flow. Here's how it works, with a focus on the benefits for your team.

1. Ingestion: Capturing Data in Real Time

Data flows in from various sources without overwhelming your systems.

- Streaming order events arrive via Azure Event Hubs for low-latency capture.

- Operational changes (like customer updates or menu tweaks) are pulled from Azure SQL Database using Lakeflow Connect for change data capture (CDC) and change tracking (CT).

Business benefit: This incremental approach minimises load on your source systems and enables near real-time updates. Instead of waiting hours for batch loads, teams see fresh data shortly after it changes, which shortens decision-making delays and improves responsiveness to market changes.
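To make the incremental idea concrete, here is a minimal plain-Python sketch of applying captured changes to a target table. It is not the Lakeflow Connect API. The `ChangeEvent` shape, the `version` field, and the `apply_changes` helper are all hypothetical, chosen only to show how a high-water mark lets each run process just the changes it has not yet seen.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One captured change from a source table (hypothetical shape)."""
    op: str                                # "insert", "update", or "delete"
    key: int                               # primary key of the affected row
    version: int                           # increasing change version
    row: Optional[Dict[str, Any]] = None   # new column values; None for deletes


def apply_changes(target: Dict[int, Dict[str, Any]],
                  changes: Iterable[ChangeEvent],
                  last_version: int) -> int:
    """Apply changes newer than last_version and return the new high-water mark."""
    for change in sorted(changes, key=lambda c: c.version):
        if change.version <= last_version:
            continue  # already applied by an earlier run
        if change.op == "delete":
            target.pop(change.key, None)
        else:
            # Inserts and updates are both treated as upserts.
            target[change.key] = dict(change.row or {})
        last_version = change.version
    return last_version


# Illustrative data only.
customers = {1: {"name": "Ada", "city": "Leeds"}}
batch = [
    ChangeEvent("update", 1, 11, {"name": "Ada", "city": "York"}),
    ChangeEvent("insert", 2, 12, {"name": "Grace", "city": "Bath"}),
    ChangeEvent("delete", 1, 13),
]
watermark = apply_changes(customers, batch, last_version=10)
```

Because the function skips anything at or below the stored version, replaying the same batch leaves the table unchanged, which is the property that makes incremental loads safe to retry.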

2. Processing: Transforming Data with Medallion Architecture

We use Apache Spark Declarative Pipelines to refine data through three layers:

- Bronze — Raw, unprocessed data stored immutably for easy replay and debugging.

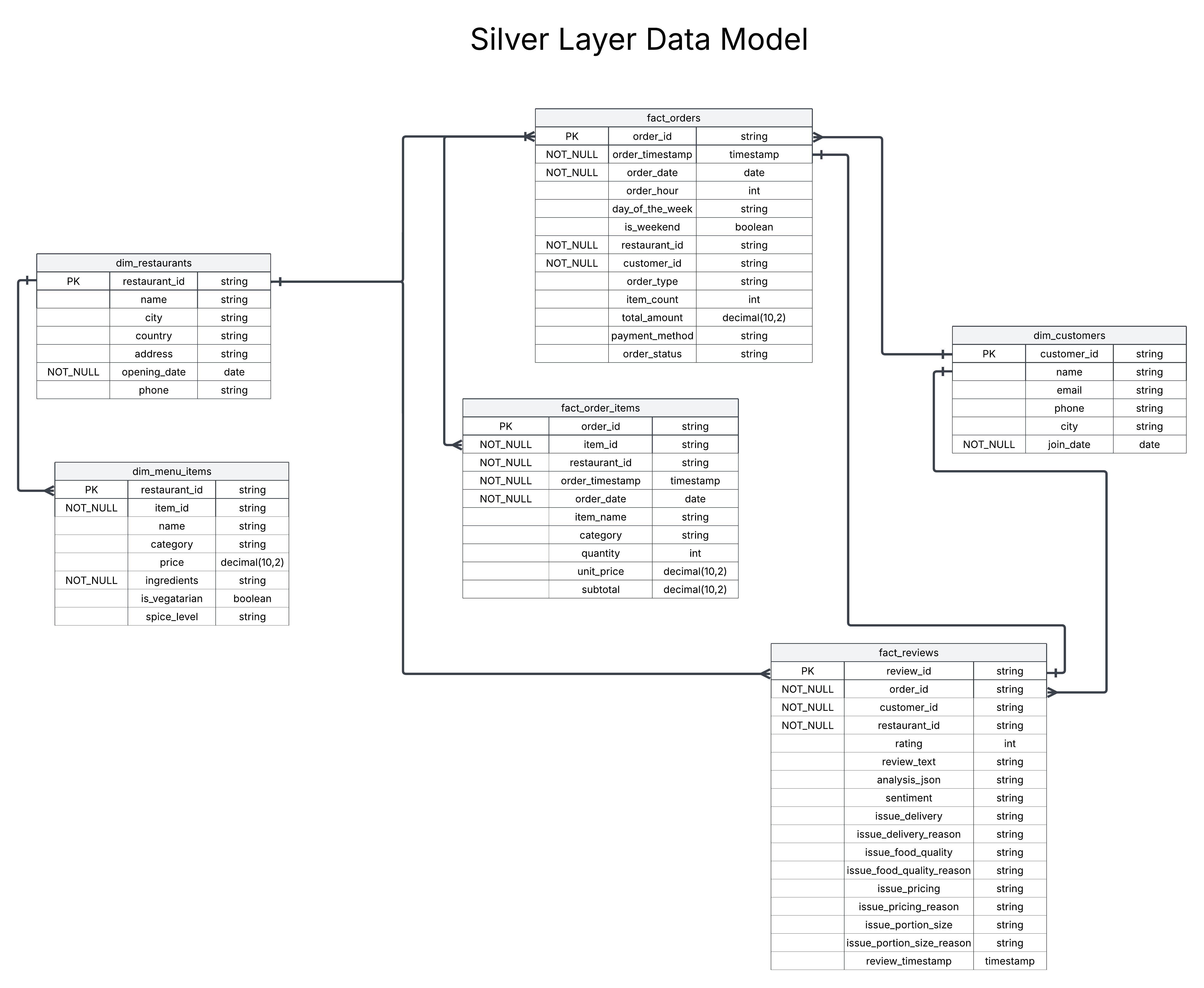

- Silver — Cleaned, validated, and joined datasets that form a reliable core model. This layer structures the data into a star schema for efficient querying, including dimension tables (for entities like restaurants, customers, and menu items) and fact tables (for transactions like orders, order items, and reviews).

- Gold — Aggregated, analytics-ready tables optimised for quick queries.

Business benefit: This structure boosts data quality and pipeline reliability, making debugging straightforward. The Silver layer's modelling creates a clean foundation for BI tools, allowing non-technical users to explore data intuitively. Organisations see faster insights and lower costs by automating optimisations, with savings of 40–60% on ingestion and processing compared to traditional methods.
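The three layers can be illustrated with a small plain-Python sketch. This is a simplified stand-in, not the declarative pipeline code used on the platform. The column names (`order_id`, `restaurant_id`, `amount`) and the functions `to_silver` and `to_gold` are assumptions made for illustration.

```python
from collections import defaultdict
from typing import Any, Dict, List

# Bronze: raw events kept exactly as received, including bad records.
bronze_orders: List[Dict[str, Any]] = [
    {"order_id": "o1", "restaurant_id": "r1", "amount": "25.50"},
    {"order_id": "o2", "restaurant_id": "r1", "amount": "14.00"},
    {"order_id": "o2", "restaurant_id": "r1", "amount": "14.00"},  # duplicate
    {"order_id": "o3", "restaurant_id": "r2", "amount": "oops"},   # invalid
    {"order_id": "o4", "restaurant_id": "r2", "amount": "30.00"},
]


def to_silver(bronze: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Silver: validate, type-cast, and de-duplicate raw rows."""
    seen = set()
    silver = []
    for row in bronze:
        try:
            order_id = row["order_id"]
            restaurant_id = row["restaurant_id"]
            amount = float(row["amount"])
        except (KeyError, TypeError, ValueError):
            continue  # drop rows that fail validation
        if order_id in seen or amount < 0:
            continue  # drop duplicates and negative amounts
        seen.add(order_id)
        silver.append({"order_id": order_id,
                       "restaurant_id": restaurant_id,
                       "amount": amount})
    return silver


def to_gold(silver: List[Dict[str, Any]]) -> Dict[str, float]:
    """Gold: aggregate revenue per restaurant for fast dashboard queries."""
    revenue: Dict[str, float] = defaultdict(float)
    for row in silver:
        revenue[row["restaurant_id"]] += row["amount"]
    return dict(revenue)


silver_orders = to_silver(bronze_orders)
gold_revenue = to_gold(silver_orders)
```

Since Bronze is never modified, Silver and Gold can be rebuilt from it at any time if a validation rule changes.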

Silver Layer Data Model

3. Governance: Secure and Centralised Management

Unity Catalog acts as the control centre for all data, providing metadata management, role-based access, lineage tracking, and secure sharing.

Business benefit: No more data chaos or compliance risks. Teams collaborate securely, ensuring everyone works with the most up-to-date, trustworthy data. This enterprise-grade governance simplifies audits and scales with your growth, supporting open standards for future-proofing.

4. Orchestration: Automating for Reliability

Databricks Workflows schedules and manages the entire process — handling dependencies, monitoring, and retries automatically.

Business benefit: Say goodbye to manual interventions. Automated scheduling and retries keep pipelines running around the clock, cut operational overhead, and let your team focus on strategy rather than firefighting, leading to more efficient workflows and fewer errors.
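As a rough illustration of dependency handling and retries, the sketch below runs tasks in dependency order using Python's standard `graphlib` module. It does not use the Databricks Workflows API. The `run_pipeline` function, the task names, and the retry count are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_pipeline(tasks: Dict[str, Callable[[], None]],
                 depends_on: Dict[str, Set[str]],
                 max_retries: int = 2) -> List[str]:
    """Run tasks in dependency order, retrying a failed task up to max_retries times."""
    completed: List[str] = []
    for name in TopologicalSorter(depends_on).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                if attempt == max_retries:
                    raise  # retries exhausted; dependents are never started
        completed.append(name)
    return completed


# Illustrative tasks: the silver step fails once, then succeeds on retry.
attempts = {"silver": 0}


def flaky_silver() -> None:
    attempts["silver"] += 1
    if attempts["silver"] == 1:
        raise RuntimeError("transient failure")


tasks = {"bronze": lambda: None, "silver": flaky_silver, "gold": lambda: None}
depends_on = {"bronze": set(), "silver": {"bronze"}, "gold": {"silver"}}
order = run_pipeline(tasks, depends_on)
```

A managed orchestrator adds scheduling, alerting, and backoff between attempts on top of this basic pattern.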

5. AI Enrichment: Unlocking Insights with Mosaic AI

We integrate Mosaic AI for advanced analysis on customer reviews — extracting sentiment and identifying issues like delivery problems, food quality concerns, or pricing feedback.

Business benefit: Turns unstructured text into structured intelligence (as seen in the fact_reviews table), revealing trends that drive customer satisfaction and revenue. With Databricks' AI-native features, you get faster model development and seamless integration, empowering non-technical users to leverage AI without needing data scientists on every project.
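The shape of this enrichment step, free text in and structured columns out, can be sketched as follows. A simple keyword rule stands in for the Mosaic AI model so that the example runs anywhere; it is not how the platform classifies reviews. The word lists, the `enrich_review` function, and the output field names are assumptions.

```python
import re
from typing import Any, Dict

# Hypothetical keyword lists standing in for a real sentiment model.
ISSUE_TERMS = {
    "delivery": {"late", "delivery", "driver"},
    "food_quality": {"cold", "stale", "bland", "undercooked"},
    "pricing": {"expensive", "overpriced", "price"},
}
POSITIVE = {"great", "delicious", "friendly", "fast", "fresh"}
NEGATIVE = {"late", "cold", "stale", "bland", "undercooked",
            "expensive", "overpriced", "rude"}


def enrich_review(review_id: str, text: str) -> Dict[str, Any]:
    """Turn one free-text review into a structured row."""
    # Match whole words so that "late" does not match inside "plate".
    words = set(re.findall(r"[a-z]+", text.lower()))
    score = len(words & POSITIVE) - len(words & NEGATIVE)
    if score > 0:
        sentiment = "positive"
    elif score < 0:
        sentiment = "negative"
    else:
        sentiment = "neutral"
    topics = sorted(name for name, terms in ISSUE_TERMS.items() if words & terms)
    return {"review_id": review_id, "sentiment": sentiment, "topics": topics}


bad = enrich_review("rv1", "Food arrived late and cold, and it was overpriced.")
good = enrich_review("rv2", "Delicious, fresh food and fast service.")
```

In the real pipeline the classification would come from a model rather than word lists, but the output row keeps the same idea: one review, one sentiment label, and a list of issue topics that dashboards can filter on.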

6. Visualisation: Delivering Actionable Insights

Curated data feeds into Databricks SQL dashboards, tracking revenue trends, customer behaviour, restaurant performance, and sentiment.

Business benefit: Business teams get interactive, real-time views without IT bottlenecks. This closes the loop from data to decisions, fostering a data-driven culture and quicker ROI — such as spotting underperforming areas before they impact the bottom line.

Technical Deep Dive: Rigorous Design for Performance

For those interested in the nuts and bolts, here's a closer look:

- Ingestion details: Event Hubs fans out streaming data to Spark consumers with minimal delay. Lakeflow Connect efficiently captures inserts, updates, and deletes from Azure SQL, using Delta MERGE to keep Bronze tables accurate.

- Layered processing: Bronze uses Delta tables for transaction logs and auditing. Silver applies validations and joins to create the star schema model, ensuring data integrity. Gold employs materialised views to precompute joins, slashing query times and compute costs.

- Declarative pipelines: These define transformations in code that is easy to maintain, with built-in incremental processing and lineage for traceability.

- Observability: Includes alerts, schema validation, and logs to catch issues early, ensuring high reliability.

- Scalability: Built on open formats like Delta Lake, this supports ACID transactions (reliable reads/writes) and handles structured and unstructured data natively — ideal for growing from gigabytes to petabytes.
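The observability point above mentions schema validation and alerts. A minimal sketch of that idea, assuming a hypothetical expected schema and a 10% failure threshold, might look like this. It is plain Python rather than pipeline expectations, and `validate_batch` is an illustrative name.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical expected schema for incoming order rows.
EXPECTED_SCHEMA: Dict[str, type] = {
    "order_id": str,
    "restaurant_id": str,
    "amount": float,
}


def validate_batch(rows: List[Dict[str, Any]],
                   schema: Dict[str, type],
                   max_failure_rate: float = 0.1
                   ) -> Tuple[List[Dict[str, Any]], List[Dict[str, Any]], bool]:
    """Split rows into valid and quarantined, and flag the batch if too many fail."""
    valid: List[Dict[str, Any]] = []
    quarantined: List[Dict[str, Any]] = []
    for row in rows:
        # A missing column yields None, which fails the type check.
        problems = [f"{col}: expected {typ.__name__}"
                    for col, typ in schema.items()
                    if not isinstance(row.get(col), typ)]
        if problems:
            quarantined.append({"row": row, "problems": problems})
        else:
            valid.append(row)
    failure_rate = len(quarantined) / len(rows) if rows else 0.0
    return valid, quarantined, failure_rate > max_failure_rate


incoming = [
    {"order_id": "o1", "restaurant_id": "r1", "amount": 25.5},
    {"order_id": "o2", "restaurant_id": "r1", "amount": "14.00"},  # wrong type
    {"order_id": "o3", "amount": 30.0},                            # missing column
    {"order_id": "o4", "restaurant_id": "r2", "amount": 12.0},
]
valid_rows, quarantined_rows, should_alert = validate_batch(incoming, EXPECTED_SCHEMA)
```

Quarantining bad rows with the reason attached, instead of silently dropping them, gives engineers something concrete to inspect when an alert fires.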

Why a Data Lakehouse Like Databricks?

Databricks isn't just technology — it's a business accelerator. It unifies data storage, processing, analytics, and AI in one platform, eliminating the need for multiple vendors and reducing complexity. Key advantages include:

- Cost efficiency: Pay only for what you use, with automatic optimisations cutting TCO by up to 97% in real-world cases.

- Speed and agility: Real-time analytics mean insights in minutes, not days — perfect for dynamic industries like retail or hospitality.

- AI readiness: Built-in tools make it easy to add machine learning, turning data into predictive power without extra setups.

- Collaboration: Analysts, engineers, and executives work from the same source, fostering innovation and faster delivery.

- Security and compliance: Unified governance ensures data is protected and traceable, meeting enterprise standards effortlessly.

Companies like Insulet (healthcare) achieved 12x faster processing, while retailers use it for real-time sales monitoring — proving its value across sectors.

Addressing Potential Risks

While the benefits of a Databricks lakehouse are substantial, like any enterprise platform there are potential risks to consider. We've designed this solution with best practices to mitigate them, drawing from real-world implementations.

- Security and data breach risks: Databricks operates on a shared responsibility model, in which the platform secures the underlying service while customers remain responsible for configuring access, networking, and encryption in their own workspaces. We help configure customer-managed keys (CMK), Private Link for secure networking, multi-factor authentication, and row-level security. Unity Catalog centralises access controls and audit logging, reducing breach risks and supporting compliance with regulations like GDPR or the EU AI Act.

- Cost overruns: We optimise with auto-scaling clusters, Delta Lake partitioning, and caching to cut costs by 40–60%. Pre-aggregated views and monitoring tools prevent surprises, while our architecture expertise ensures predictable budgeting from day one.

- Complexity and learning curve: Databricks provides detailed documentation and a unified interface, but we accelerate adoption with tailored training, hands-on workshops, and phased rollouts — minimising ramp-up time by focusing on your specific use cases.

- Performance and reliability issues: Using materialised views, observability alerts, and cross-region replication ensures high availability and fast performance. We test integrations thoroughly and incorporate retry logic in workflows to handle failures gracefully.

- AI-specific risks: The Databricks AI Security Framework (DASF) includes continuous monitoring, bias detection, and data privacy controls to align with standards like NIST AI RMF. We embed these in your setup with ongoing audits to maintain trust and accuracy.

Why Partner with Us?

We have proven expertise in Azure and Databricks integrations — including sophisticated data modelling like the Silver layer schema shown here — delivering platforms that scale, integrate AI, and drive measurable results such as enhanced customer insights and operational efficiency.

As your partner, we handle the complexity so you can focus on growth. Whether starting small or modernising at scale, we tailor solutions to your needs, backed by best practices and ongoing support.

Ready to unlock your data's potential? Let's connect and discuss how we can build this for you.